[NEW LAUNCH!] Introducing Amazon Elastic Inference: Reduce Deep Learning Inference Cost up to 75% (AIM366) - AWS re:Invent 2018

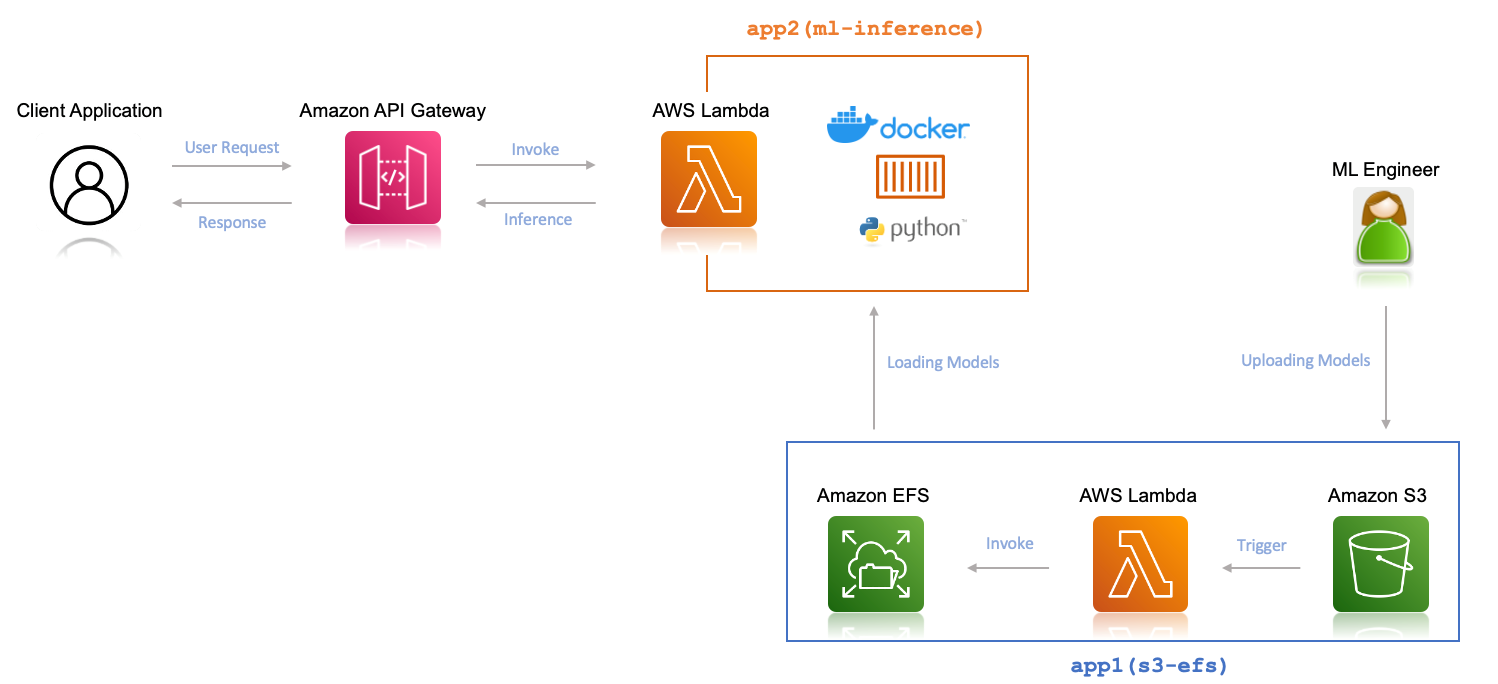

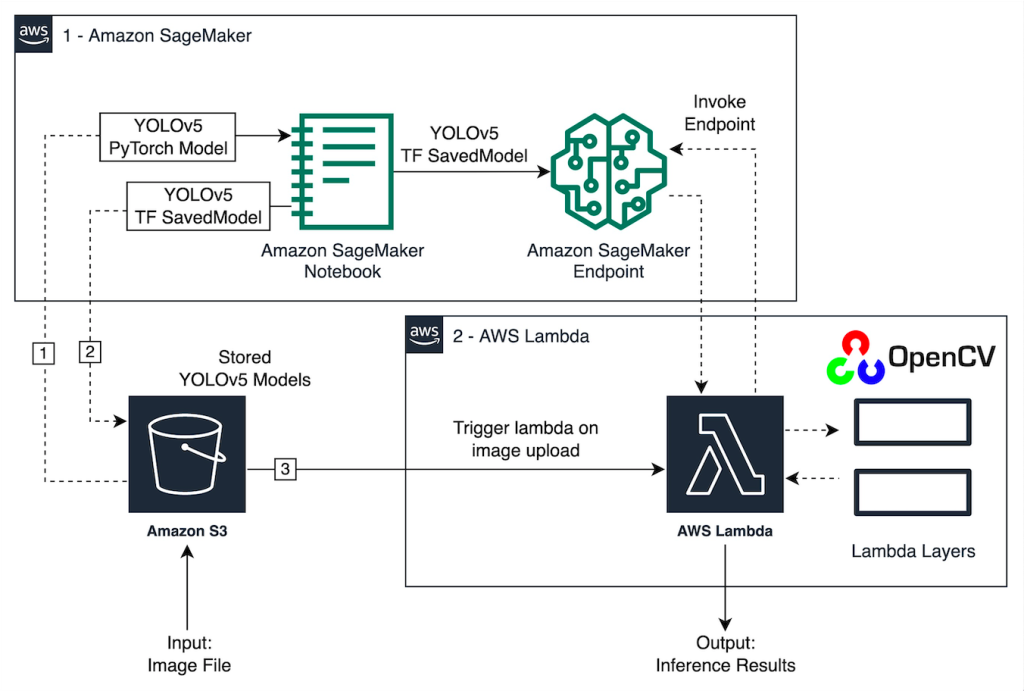

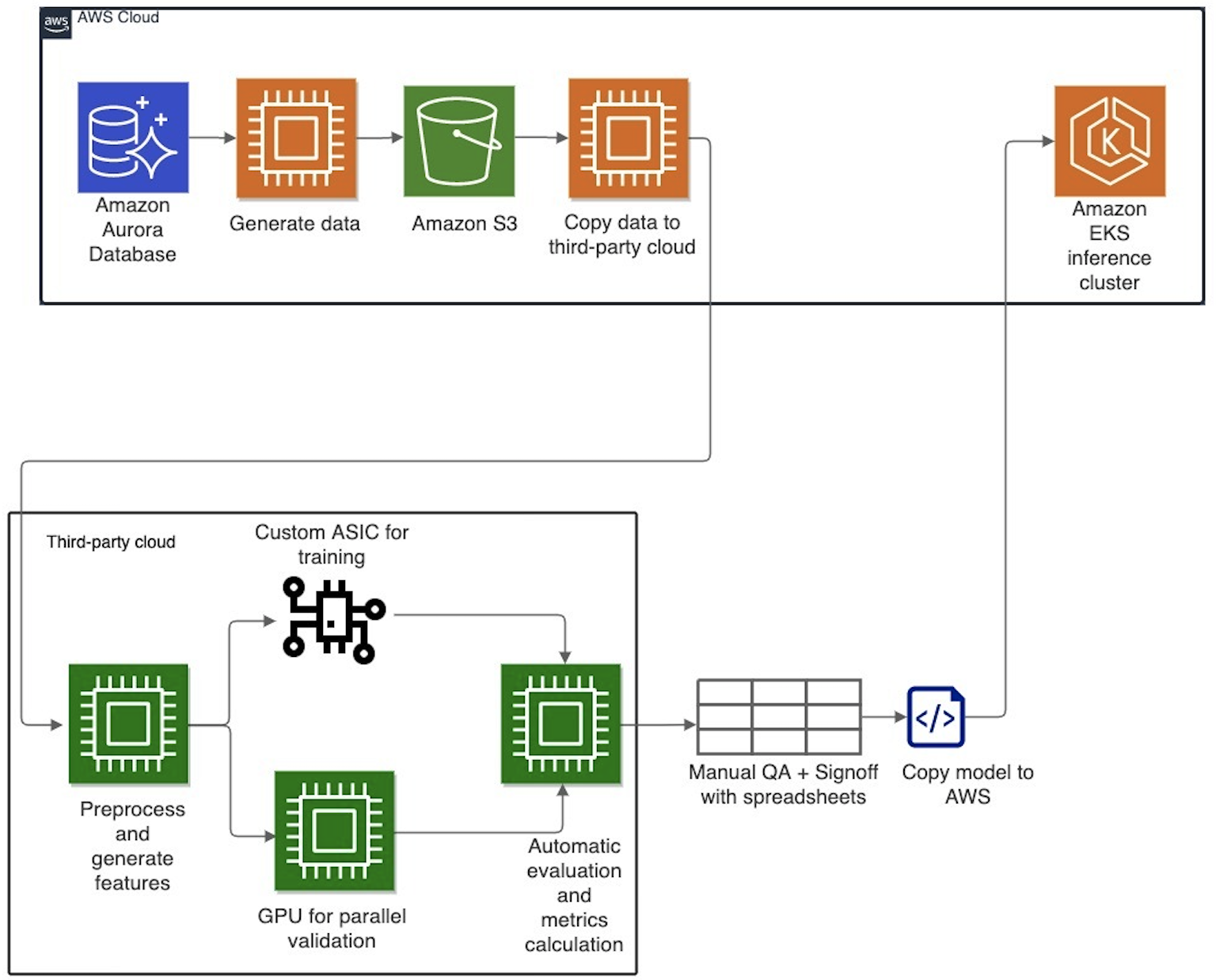

Evolution of Cresta's machine learning architecture: Migration to AWS and PyTorch | Data Integration

Using Fewer Resources to Run Deep Learning Inference on Intel FPGA Edge Devices | AWS Partner Network (APN) Blog

Improve high-value research with Hugging Face and Amazon SageMaker asynchronous inference endpoints | AWS Machine Learning Blog

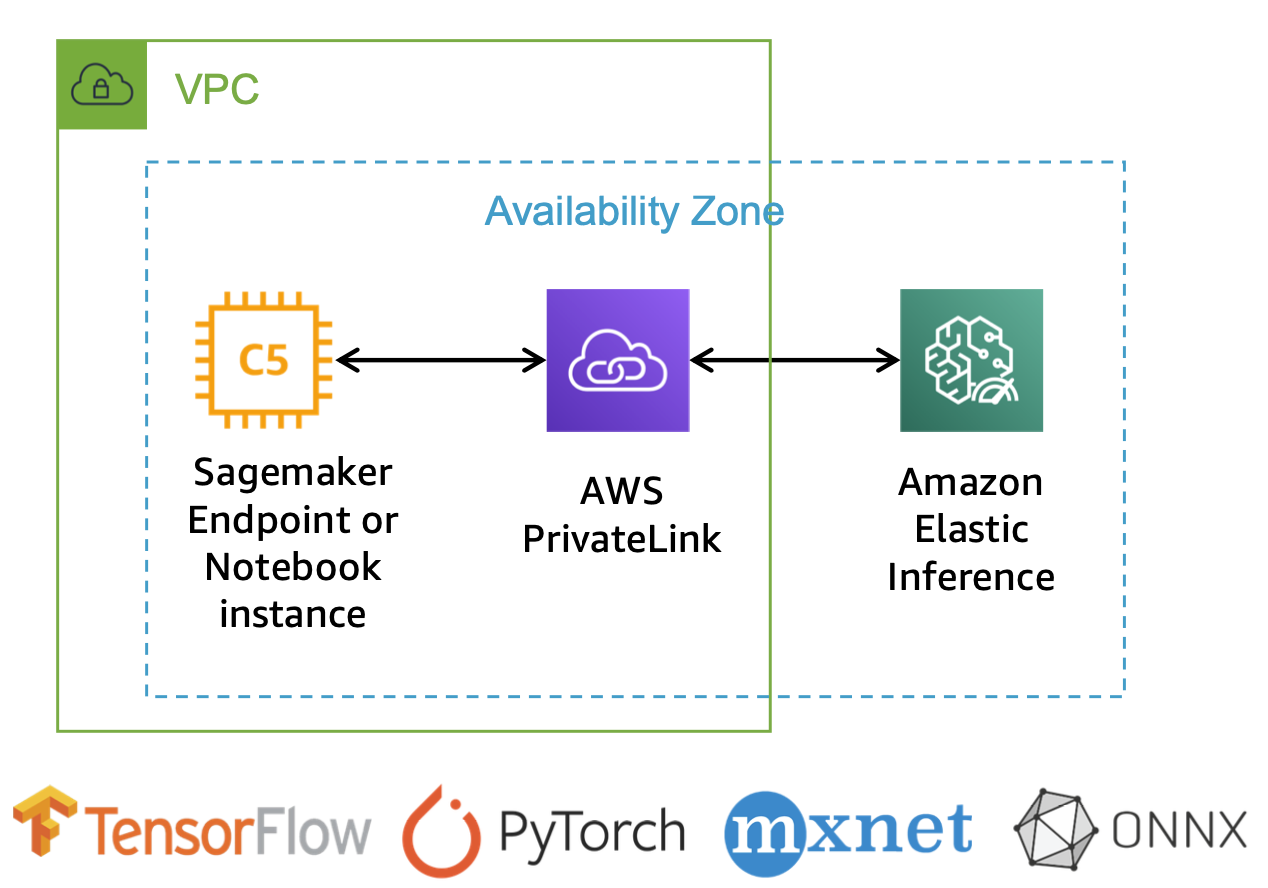

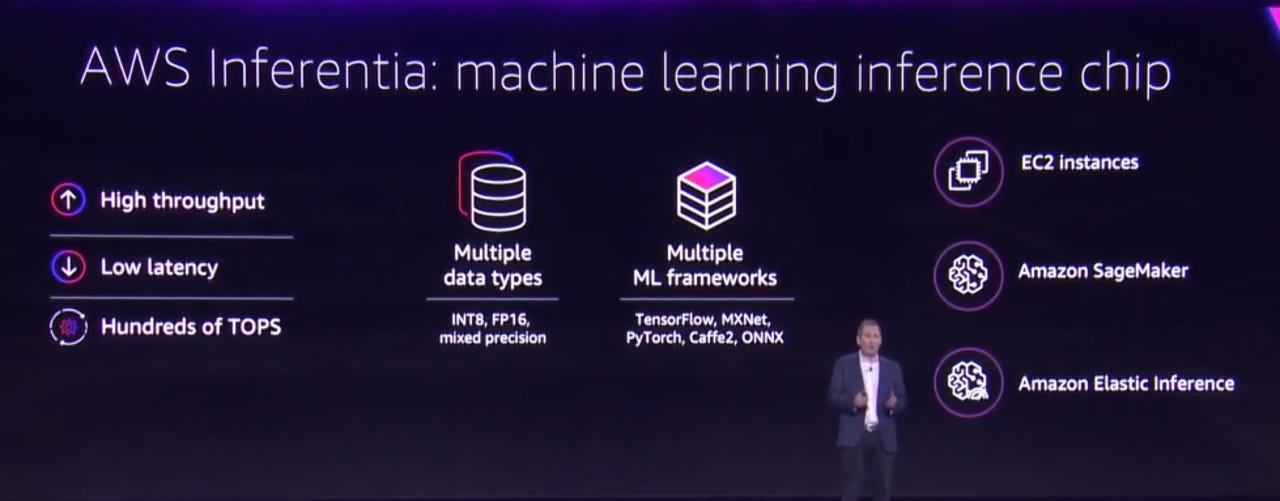

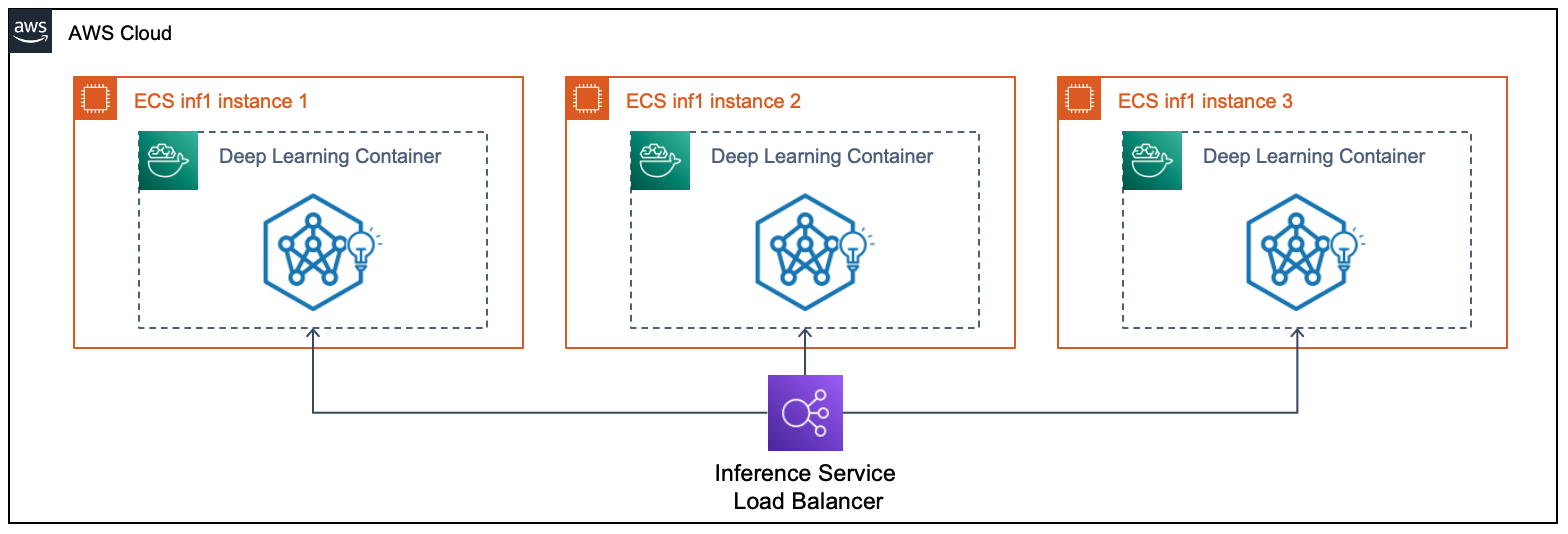

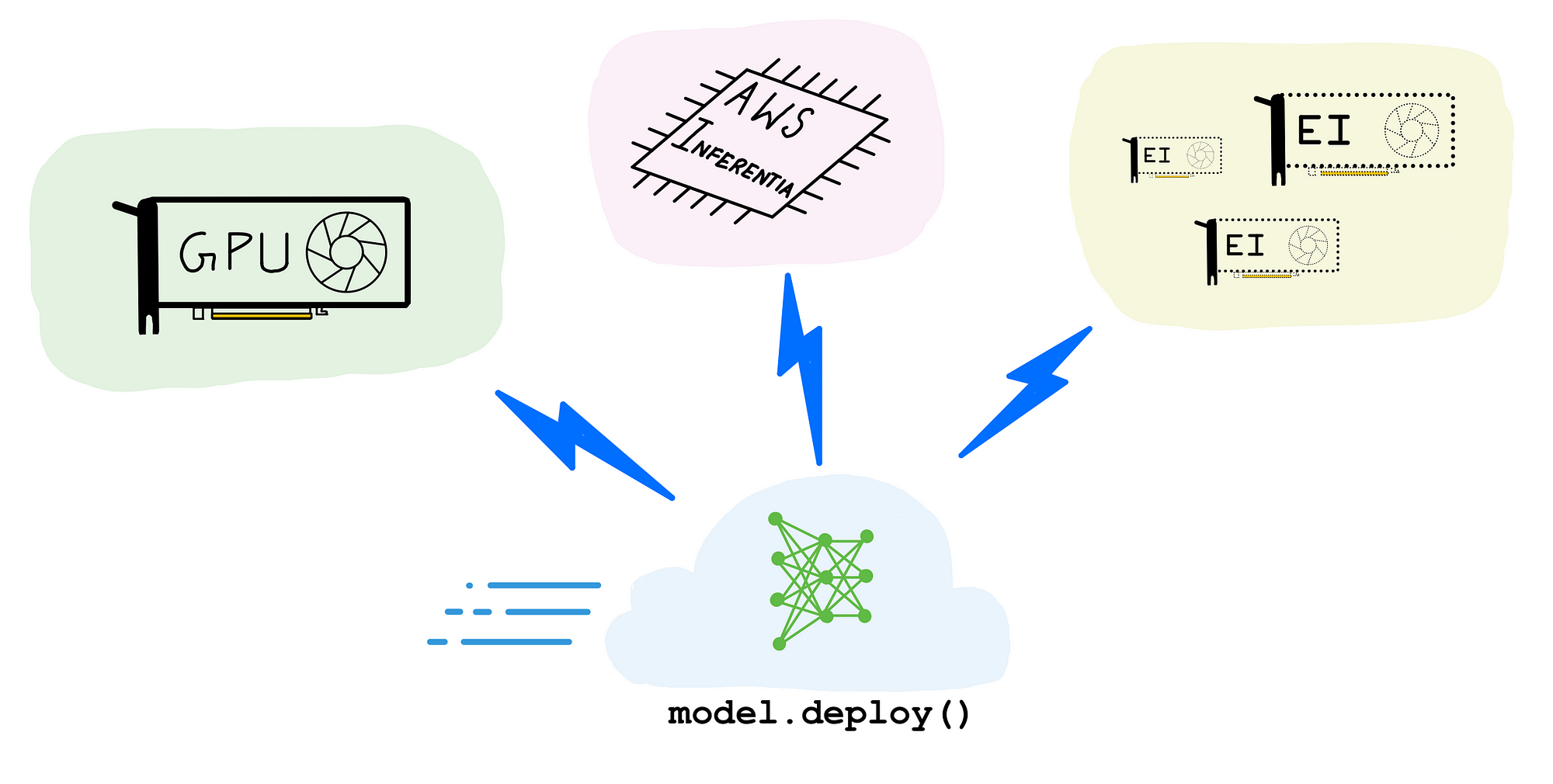

A complete guide to AI accelerators for deep learning inference — GPUs, AWS Inferentia and Amazon Elastic Inference | by Shashank Prasanna | Towards Data Science